Abstract

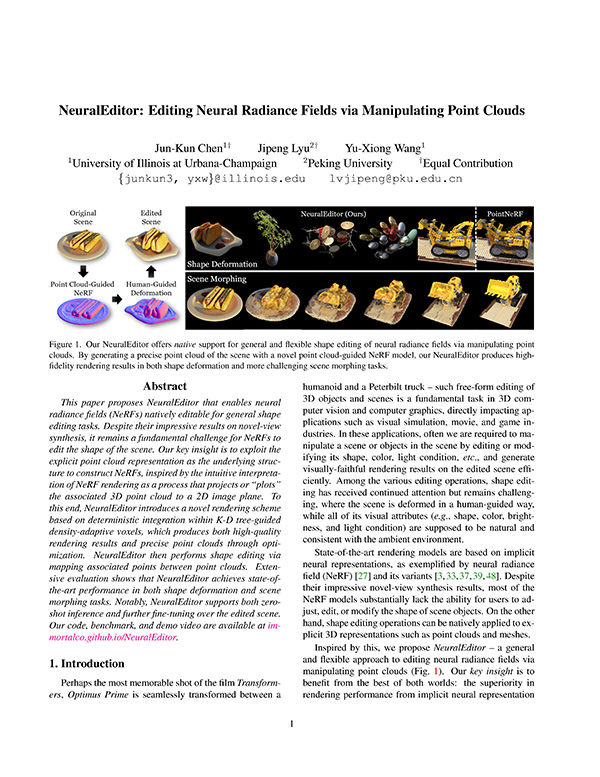

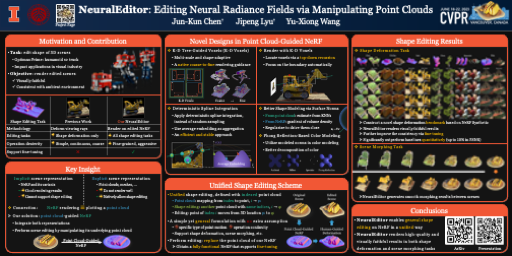

This paper proposes NeuralEditor, which makes neural radiance fields (NeRFs) natively editable for general shape editing tasks. Although NeRFs achieve impressive results on novel-view synthesis, editing the shape of a scene remains a fundamental challenge for them. Our key insight is to exploit the explicit point cloud representation as the underlying structure for constructing NeRFs, inspired by the intuitive interpretation of NeRF rendering as a process that projects or “plots” the associated 3D point cloud onto a 2D image plane. To this end, NeuralEditor introduces a novel rendering scheme based on deterministic integration within K-D tree-guided density-adaptive voxels, which produces both high-quality rendering results and precise point clouds through optimization. NeuralEditor then performs shape editing by mapping associated points between point clouds. Extensive evaluation shows that NeuralEditor achieves state-of-the-art performance in both shape deformation and scene morphing tasks. Notably, NeuralEditor supports both zero-shot inference and further fine-tuning over the edited scene.
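Two ideas from the abstract can be illustrated with a small sketch: a K-D tree that partitions a point cloud into density-adaptive cells, and shape editing as a point-to-point mapping. This is not the NeuralEditor implementation. The names `kd_cells` and `edit_points`, the `leaf_size` parameter, and the median-split rule are all assumptions made for illustration, and the rendering step (deterministic integration) is not shown.

```python
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, float, float]
Cell = Tuple[Point, Point, List[Point]]  # (min corner, max corner, points inside)


def kd_cells(points: Sequence[Point], leaf_size: int = 4) -> List[Cell]:
    """Split points at the median of the widest axis until each leaf
    holds at most `leaf_size` points (hypothetical parameter).

    Dense regions are split more often, so their cells end up smaller:
    the cell size adapts to the local point density.
    """
    if leaf_size < 1:
        raise ValueError("leaf_size must be at least 1")

    def bounds(pts: List[Point]) -> Tuple[Point, Point]:
        lo = tuple(min(p[a] for p in pts) for a in range(3))
        hi = tuple(max(p[a] for p in pts) for a in range(3))
        return lo, hi

    def split(pts: List[Point]) -> List[Cell]:
        lo, hi = bounds(pts)
        if len(pts) <= leaf_size:
            return [(lo, hi, pts)]
        # Choose the axis with the largest extent and cut at the median.
        axis = max(range(3), key=lambda a: hi[a] - lo[a])
        ordered = sorted(pts, key=lambda p: p[axis])
        mid = len(ordered) // 2
        return split(ordered[:mid]) + split(ordered[mid:])

    return split(list(points)) if points else []


def edit_points(
    points: Sequence[Point],
    features: Sequence[object],
    deform: Callable[[Point], Point],
) -> Tuple[List[Point], List[object]]:
    """Move every point with `deform`; each feature stays attached to
    its point by index, so the edited cloud keeps its appearance data."""
    if len(points) != len(features):
        raise ValueError("points and features must have the same length")
    return [deform(p) for p in points], list(features)
```

In this sketch an edit is just a function from old to new point positions. Because the per-point features are carried over unchanged, the edited cloud could be rendered without retraining, which is the intuition behind zero-shot inference on an edited scene.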

Architecture & Design

Shape Deformation

[Video panels: PointNeRF (Baseline), zero-shot inference; NeuralEditor (Ours), zero-shot inference; NeuralEditor (Ours), fine-tuning]

Scene Morphing

[Video panels: PointNeRF (Baseline); NeuralEditor (Ours)]

Citation

Acknowledgements

This work was supported in part by NSF Grant 2106825, NIFA Award 2020-67021-32799, the Jump ARCHES endowment, the NCSA Fellows program, the IBM-Illinois Discovery Accelerator Institute, the Illinois-Insper Partnership, and the Amazon Research Award. This work used NVIDIA GPUs at NCSA Delta through allocation CIS220014 from the ACCESS program. We thank the authors of NeRF for their help in processing Blender files of the NS dataset.

The website template is borrowed from RefNeRF.
